Hi! I'm Brandon.

I am a Mixed-Methods Researcher at my core, with multidisciplinary skills spanning user experience, education, game design and software development.

With a solid foundation in HCI and experimental methodologies, I offer a unique, user-focused perspective in both research and development roles.

My hands-on industry experience across various environments is complemented by strong communication skills and a passion for integrating multiple disciplines.

This is a collection of my professional experience and original research.

Several projects I built to help speed up language learning.

Projects include:

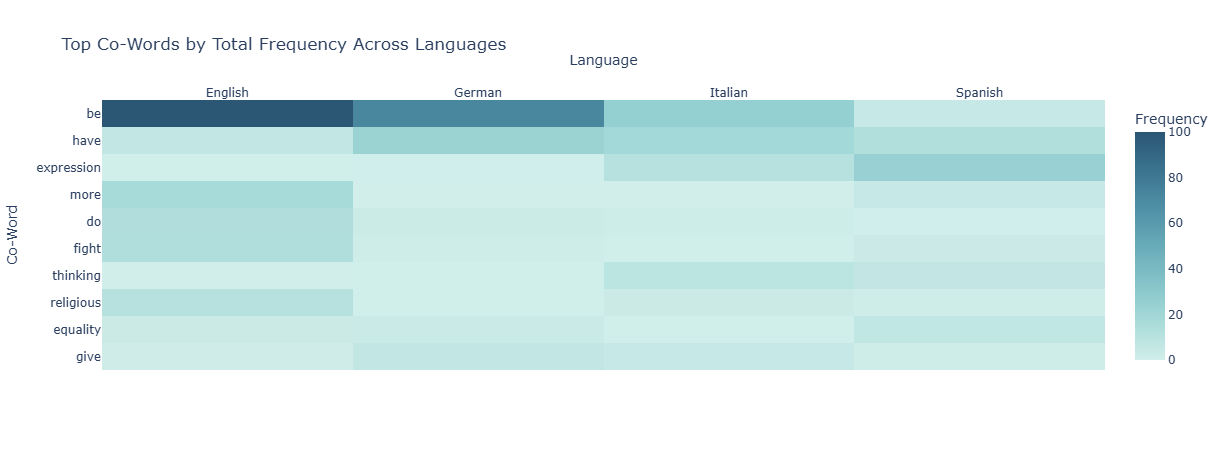

An exploration of what "Freedom" means in different cultures, via their languages. Includes a brief study with native speakers.

Python, Sentence Transformers, spaCy, Selenium, Plotly, Dash

Feel & Play is a musical mobile app that helps children develop emotional intelligence skills.

Lead developer, data analyst & user researcher

Unity, C#, SQL, Observation studies, Unity Analytics, Surveys

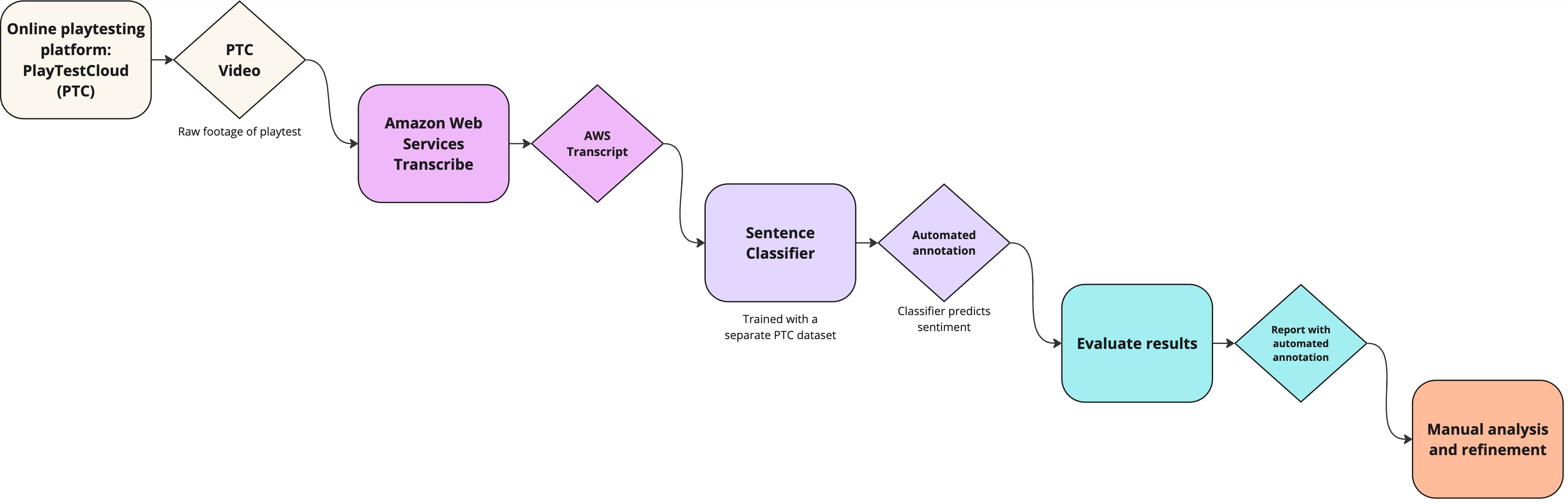

Developed for my master's thesis, this program was designed to improve the user research process at HypeHype. In a study comparing it against a manual approach, it was shown to greatly increase the speed of insight collection, allowing a higher turnover of user tests.

This program uses neural language models to automatically classify, label and highlight areas of interest to the games user researcher.

Developed using Python and Hugging Face language models while I was working as a Player Analyst at HypeHype, a mobile game company.

In brief, remote playtesting usually requires the manual review of tens of hours of footage, and we wanted to expedite that process.

Transcripts were generated from the playtest videos and analyzed by a sentence classifier trained on manually labelled data. The classified results, in the form of video timestamps, were then passed to the user researcher, sorted by sentiment and intensity.
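As a rough illustration of that last step, here is a minimal sketch of how already-classified transcript segments might be sorted before being handed to a researcher. It is not the code used at HypeHype: the `Segment` fields, the label names, the choice to show negative moments first, and the helpers `rank_segments` and `format_timestamp` are all assumptions made for this example, and the classification itself is skipped.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One classified transcript sentence (hypothetical structure)."""
    start_s: float    # position in the playtest video, in seconds
    text: str         # transcribed sentence
    sentiment: str    # assumed labels: "negative", "positive", "neutral"
    intensity: float  # assumed 0..1 score, higher means stronger


def rank_segments(segments, order=("negative", "positive", "neutral")):
    """Group by sentiment in the given order, strongest segments first."""
    rank = {label: i for i, label in enumerate(order)}
    return sorted(segments, key=lambda s: (rank[s.sentiment], -s.intensity))


def format_timestamp(seconds):
    """Render seconds as MM:SS for jumping to a point in the video."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


if __name__ == "__main__":
    # Made-up example data, standing in for classifier output.
    segments = [
        Segment(12.0, "I love this jump", "positive", 0.90),
        Segment(75.0, "this menu is confusing", "negative", 0.60),
        Segment(130.0, "ugh, I keep dying here", "negative", 0.95),
        Segment(200.0, "okay, next level", "neutral", 0.50),
    ]
    for seg in rank_segments(segments):
        print(format_timestamp(seg.start_s), seg.sentiment, seg.text)
```

Sorting on a `(sentiment rank, negative intensity)` key keeps the grouping and the within-group ordering in a single pass, and the `order` argument lets a researcher put a different sentiment first.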

Scale or Be Scaled is a competitive split-screen game where players race to see who can balance their scale the fastest.

Programmer, project management, designer

Unity, C#, Notion, Kanban

An FPS with fluid movement, wall-running and a frantic race up a tower against the clock.

UX researcher, Audio programmer, UI programmer

Moderated playtests, Surveys, Usability testing, Unity, C#, Jira